Week of April 10th

April 6th

Today I continued working on the machine learning model. Over the weekend I got the program to work with the model that the tutorial provided. Now all I have to do is convert my model to the .onnx format, and then I should be able to create the Windows app. The file format conversion is the part that I am having the most trouble with, though. Once I have tried every step I can think of to solve the issue, I plan to post it on Stack Overflow to get help from the community there.

Weekly summary

N/A, only one class.

Week of April 3rd

March 29th,

Today I continued working on the machine learning model. I have decided to switch to making a desktop app instead of an iOS app. I discovered a tutorial on WinML that teaches how to create an app that has machine learning capabilities.

March 31st,

Today I worked on getting the virtual reality headsets to work. I got the headsets to connect to the computer, but the controls were not working. I got some help from Alex, but we still couldn't figure it out.

April 2nd,

Today I got the VR headsets to work. The issue was that the controllers were mixed up. I don't even know how I made such a basic mistake, but I think it was because the picture on the desktop was very confusing. From the right angle, either of them could look right. As for the Oculus Go, I charged it and then connected to it using my phone, but I don't think we have the right controller, so I couldn't do anything with that headset. Afterwards I worked on converting my machine learning model.

Weekly summary

This week I changed the end goal of my project. I also got the VR headsets to work. Overall I think it was a decently productive week.

Week of March 27th

Monday, March 22nd,

Today I continued trying to convert the .h5 file into .mlmodel. This time I tried setting up a virtual environment in Anaconda and running the conversion in there. It is still not working, though.

Wednesday, March 24th,

Today I tried copying all the library versions that the author of the tutorial used and starting over from scratch. However, I soon found out that the version of coremltools that he is using cannot be installed on my computer. I tried the commands pip install coremltools==3.2 and conda install coremltools==3.2, but neither worked. It says that the only available version is 4.1.

Weekly summary

This week I was once again thwarted by the conversion of .h5 to .mlmodel. I am starting to run out of things that I can change. I might have to shift my focus to creating a desktop application that can interpret my machine learning model instead of an iOS app.

Week of March 12th

Monday, March 8th,

Today I worked on trying to get the .h5 file to convert to the .mlmodel file type (this is the machine learning model format that iOS uses), but I continue to have issues. I tried changing the version of Keras that I am using to convert the model, but it still refuses to work. After looking through solutions on Stack Overflow, I think there may be a mismatch between the version of Keras that I am using to train the model and the version of Keras that I am using to convert the model.

Wednesday, March 10th,

Today I retrained the model using a different version of TensorFlow and Keras, and used that same version of Keras to convert the model, but it is still not working.

Weekly summary

This week I encountered a major roadblock that I was not expecting. I thought that the hardest part of the project was going to be training the model, but that is actually not the case. I have begun looking into getting the application to work on other platforms like Windows or Android rather than iOS.

Week of March 6th

March 1st, Monday

Today I ran the neural net for the hiragana image recognition model in Google Colab. It worked a lot better than any of my previous attempts, reaching an accuracy of about 98 percent. It was kind of slow, though, and took about 20 minutes to train.

March 3rd, Wednesday

Today I tried to convert the hiragana model from the .h5 file format to the .mlmodel format. However, I kept getting errors that said that "the layer was not iterable". I'm not really sure what is going on.

Summary

This week I made major progress, finally getting the model to train. I think that, more than how to do machine learning, I am learning how to troubleshoot. As soon as I get this model to work I can repeat the process for the katakana and kanji models.

Week of Feb 27th

Feb 23rd

Yesterday I scheduled my road test for March 1st at 8:30 AM. I really hope that I will pass this test and get my license. If I do, then "the student has surpassed the teacher," because Cade does not have his full or even provisional license yet. Today in class I got Anaconda working and installed TensorFlow in a virtual environment with the correct version of Python. To do this I used the command "pip install tensorflow". Though the computer went through the process of installing the library, when I ran the file I received the error "tensorflow not found." I am pretty confused and do not know why it is not working.

Feb 25th

Today I took a different approach to getting TensorFlow to work. This time I uninstalled Python on the computer, then reinstalled the later version (3.7) so that it was compatible with TensorFlow. However, I received the same error that tensorflow could not be found. When I tried typing "import tensorflow as tf" directly into the terminal, I received an error that I think means there was something wrong with the GPU. I tried going through some online solutions, but none worked.
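One common reason a library installs without complaint but still cannot be found is that pip put it into a different Python than the one running the file. The sketch below is only a diagnostic idea, not a confirmed explanation of the errors above: it reports which interpreter is running and whether that interpreter can see a given module. The helper name `describe_module` is made up for illustration.

```python
import importlib.util
import sys


def describe_module(name):
    """Report the running interpreter and whether it can locate `name`.

    find_spec only looks the module up; it does not import it, so this
    works even if importing the library itself would crash.
    """
    spec = importlib.util.find_spec(name)
    return {
        "interpreter": sys.executable,                # the Python actually running
        "module": name,
        "found": spec is not None,                    # False means "not installed here"
        "location": getattr(spec, "origin", None),    # where it was found, if at all
    }


# Check the library from the entries above.
print(describe_module("tensorflow"))
```

If "found" is False, comparing the printed interpreter path with the environment where pip ran would be the next thing to check. If it is True and the import still fails, the problem is more likely inside the library (for example, the GPU-related error).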

Weekly summary

This week was kind of depressing because I want to finally get the model to work, but the computer simply isn't finding the TensorFlow library. Next class I plan on uploading the files that were produced by the earlier part of the code to my Google Drive and then running the neural network in Google Colab. After I get the trained neural network file, I will save it to the computer and then run the rest of the code using the Notepad++ and terminal method.

Week of Feb 20th weblogs

Wednesday, Feb. 17th

Today I decided to move my work on the machine learning app to a new computer. This time, instead of using Google Colab, I am using the terminal and Notepad++. Andy helped me set it up, and we worked together to troubleshoot errors with missing libraries and other things. Though I got the part of the code that repackages the data into NumPy arrays working, I ran into issues on the part of the code where the images are modified to be the right shape and split into training and test data.
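For reference, the train/test split step can be sketched in plain Python. This is a minimal illustration with placeholder data, not the tutorial's actual code; the function name, the 80/20 ratio, and the seed are all assumptions.

```python
import random


def train_test_split(samples, labels, test_fraction=0.2, seed=0):
    """Shuffle samples and labels together, then split them into train and test sets."""
    if len(samples) != len(labels):
        raise ValueError("samples and labels must have the same length")

    # Shuffle indices instead of the data so each image stays paired with its label.
    indices = list(range(len(samples)))
    random.Random(seed).shuffle(indices)

    n_test = int(len(indices) * test_fraction)
    test_idx, train_idx = indices[:n_test], indices[n_test:]

    x_train = [samples[i] for i in train_idx]
    y_train = [labels[i] for i in train_idx]
    x_test = [samples[i] for i in test_idx]
    y_test = [labels[i] for i in test_idx]
    return x_train, x_test, y_train, y_test


# Placeholder "images" and labels, standing in for the real character data.
images = [f"image_{i}" for i in range(10)]
labels = [i % 5 for i in range(10)]
x_train, x_test, y_train, y_test = train_test_split(images, labels)
print(len(x_train), len(x_test))  # 8 2
```

Shuffling before splitting matters when the files are stored grouped by character; otherwise the test set could end up containing only the last few classes.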

Friday Feb. 19th

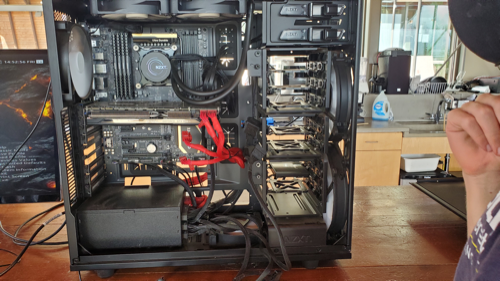

Today I ran into issues getting the computer to start. Thus the rest of the class was spent trying to help Will and Nick fix the fans on the computer.

By the end of class everything was fixed. I stayed a little bit after class to work on the program a little more. I got the part of the code that failed before to work this time. The next part of the code to implement will be the actual neural network. However, as I tried to import the tensorflow library, I found out that the version of Python that I have is actually "too new" for TensorFlow to support. So then I figured out that I had to install Anaconda so I can create a virtual environment where I can run an older version of Python. I had trouble using PowerShell to get the hash value for the Anaconda installer, and this is about where I stopped.
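As an alternative to PowerShell, the hash of a downloaded file can also be computed with Python's standard library. This is a minimal sketch that assumes the published hash is SHA-256; the function name and the file name in the comment are placeholders, not the real installer name.

```python
import hashlib


def sha256_of_file(path, chunk_size=1024 * 1024):
    """Return the SHA-256 hex digest of a file, reading it in chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        # Read 1 MB at a time so a large installer does not need to fit in memory.
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


# Usage (hypothetical file name):
# print(sha256_of_file("anaconda-installer.exe"))
```

The printed value would then be compared by eye (or with `==`) against the hash listed on the download page.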

Weekly summary

This week I made the switch from Google Colab (a machine learning platform that operates almost exclusively in the cloud) to the text editor and terminal method. There are several pros and cons to making the switch. On one hand, Google Colab allows me to work from any computer, but on the other hand it is very inconsistent and slow. With the alternative method I get much more consistent results and can get more help from Andy and Will, since they are more familiar with this method.

Feb. 13th

Tuesday, February 9th

Today I worked on trying to get the Matplotlib rendering of the dataset to work. I got help from Will, but despite our combined efforts it did not work out.

Thursday, February 11th

Today I spent the class cleaning my laptop using the iFixit kit and some canned air. I found some loose magnets inside the computer, so I spent some time putting them back into place. Now when I close or shake my computer there is no noise.

Weekly summary

This week I had a lot of issues with Google Colab. On the first run-through of the code, Matplotlib would produce images of the data being used, but on subsequent run-throughs of that same code block it would fail even though nothing had changed. I suspect it has something to do with how Google Colab runs code. It also took an unnecessarily long time to run the code. I am thinking of switching to a different method.

Weblogs Feb. 6th

Tuesday, Feb. 2nd,

Today I didn't get much done because my computer died and the charger that I found wasn't powerful enough. I did, however, set up a workspace on a computer in the monitoring lab that I can work on in case something like this happens again.

Friday, Feb 5th,

Practiced driving and read the manual in preparation for my test on the 18th. Still working on improving the accuracy of my machine learning model.

Weekly summary

This week I didn't get much done on my machine learning project for various reasons. Next week my goal is to get an accuracy that is similar to that of the author of the TowardsDataScience article that I am referencing.

Weblogs Jan 30th

Monday, Jan. 25

Today I helped out Anuhea and Alec with the solar panel analysis. We used the mango server to look at the efficiency and total power output of the panels.

Wednesday, Jan 27

Today I pitched my project to Dr. Bill. As I talked about in the last weblog, my project is making an app that uses machine learning to identify Japanese characters. I worked on how to upload the data to the Google Colab notebook that I am using. Before, I was trying to upload the files individually to the server using the files.upload() command, but this proved too time-consuming and I would have to redo it each time I opened the notebook. Instead, I connected the Colab notebook to my Google Drive using the drive.mount() command. From there the code could properly access the files.

Friday, Jan 29th

Today I worked on training the katakana model. However, I had a lot of trouble getting the model's accuracy to improve. Also, each time the model reached about the 8th epoch, I got an error because the computer wanted to be "Restoring model weights from the end of the best epoch." I am still working on resolving these errors.
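The quoted message matches what Keras's EarlyStopping callback reports when it is set to restore the best weights. If that is what happened here (an assumption, since the training code is not shown), it is a notice that training stopped early because the validation loss stopped improving, not a crash. The plain-Python sketch below illustrates the idea; the loss values and the patience of 3 are made up.

```python
def early_stopping_epoch(val_losses, patience=3):
    """Return (stop_epoch, best_epoch), both counted from 1.

    Training stops once the validation loss has failed to improve for
    `patience` epochs in a row; the weights from best_epoch are the ones
    that would be restored.
    """
    best_loss = float("inf")
    best_epoch = 0
    waited = 0
    for epoch, loss in enumerate(val_losses, start=1):
        if loss < best_loss:
            best_loss, best_epoch, waited = loss, epoch, 0
        else:
            waited += 1
            if waited >= patience:
                return epoch, best_epoch
    return len(val_losses), best_epoch


# Hypothetical validation losses: improvement stalls after epoch 5.
losses = [0.90, 0.70, 0.60, 0.55, 0.50, 0.52, 0.51, 0.53, 0.54, 0.56]
stop_epoch, best_epoch = early_stopping_epoch(losses, patience=3)
print(stop_epoch, best_epoch)  # 8 5
```

Under this reading, stopping around the same epoch every run would point to the accuracy plateau as the thing to fix, rather than the message itself.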

Weekly summary

After talking with Dr. Bill, I have been looking into the possibility of using this technology to teach people who are new to Japanese how to write it. I think this is totally possible. Another thing I was thinking of doing was having the model learn the way a person new to Japanese learns, though this is something I am not sure how to do.

Weblogs Jan. 23

Tuesday, Jan. 19th-

Today most of the class was spent talking with Dr. Bill. At the end of class I looked into some of the prospective projects and worked on a machine learning model in TensorFlow.

Thursday, Jan 21st-

I decided today that I would help out with the solar panel stuff, though I spent most of my time working on how to use the function model.evaluate() to test my machine learning model. It seems that the data that I was giving the model didn't have the right shape, but I didn't know how to change the shape.

Weekly summary-

After thinking this weekend about the best project for this semester, I figured out what I want to do. I want to make an app that helps me with my Japanese homework. The way this app would work is that you would draw the Japanese character on the phone screen using your finger, and then the phone would use machine learning to find the various definitions of the character. After doing some research I found an article on TowardsDataScience.com with an in-depth guide on how to build such an app. Though I will mostly be using his code in my project, my contributions will be the following:

- trying different layer structures

- using different activation functions (the part of a model that makes neural networks non-linear)

- trying different optimizers (how the model updates its weights to reduce its error)

- using different regularization techniques (how to keep the model from overfitting)

- experimenting with different data augmentation techniques (kind of like adding water to lemon concentrate to make lemonade)

- adding features to the app

- implementing my app in my Japanese class
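To make the data augmentation item concrete, here is a minimal sketch of one possible technique: shifting a character image by a pixel to create an extra training example. The tiny 3x3 "image" and the function name are made up for illustration, and this is not necessarily the technique the article uses.

```python
def shift_image(image, dx=0, dy=0, fill=0):
    """Shift a 2D list-of-lists image by dx columns and dy rows.

    Pixels pushed past the edge are dropped, and the gap left behind
    is filled with `fill`.
    """
    height, width = len(image), len(image[0])
    shifted = [[fill] * width for _ in range(height)]
    for y in range(height):
        for x in range(width):
            new_x, new_y = x + dx, y + dy
            if 0 <= new_x < width and 0 <= new_y < height:
                shifted[new_y][new_x] = image[y][x]
    return shifted


# A made-up 3x3 "stroke": 1 is ink, 0 is background.
stroke = [
    [0, 1, 0],
    [0, 1, 0],
    [0, 0, 0],
]
shifted_right = shift_image(stroke, dx=1)
print(shifted_right)  # [[0, 0, 1], [0, 0, 1], [0, 0, 0]]
```

The shifted copy keeps the same label as the original, so one drawn character becomes several slightly different training examples.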

weblog interview

Here's the link for my video:

Thanks!

Dec. 5 weblogs

Nov 30th,

Today I worked on my machine learning project. Since I have not made as much progress on my former project as I would have hoped, I am now just focusing on making a simple classification model.

Dec 2nd,

Today I spent all class talking story.

Dec 4th

Today I practiced on the driving simulator. Specifically, I practiced driving on curvy roads.

Weekly summary:

This week I have changed the direction of my project. I want to have something to show during our final presentation, so I am redirecting my efforts towards a different, easier project.

Nov 28 weekly

Tuesday, Nov 24

Today I worked on finishing up the machine learning course. I am now looking into where to start with my next project. Because not all of the information for Instagram statistics is available, I am worried that my model may not work out very well. As a result, I am planning to make the best model I can in a short amount of time and then move on to a more feasible project.

Weekly summary

This week we only had one class, so I really didn't get that much done. But this weekend I drove around a parking lot with my mom, practicing parking and turning. Having all the practice on the driving simulator was really helpful.

Nov 20 weekly

Tuesday, Nov 17

Today I spent most of my time on the driving simulator. I learned how to park and turn around properly. I also experimented with different kinds of cars to get a feel for them.

Friday, Nov 20

Today I spent most of my time working on finishing the machine learning crash course. I learned about different metrics to measure a model. Accuracy = # correct predictions / # total predictions. Recall = # correct positive predictions / # actual positives. Precision = # correct positive predictions / # positive predictions.

Weekly summary

This week I learned a lot about machine learning and made it through the bulk of the course. I think that what I learned about accuracy, precision, and recall will be very useful as I continue in my project. Accuracy, while it may sound like a good metric, is often misleading. For instance, if you had a model that predicted whether a person had a very rare disease and it guessed negative every time, its accuracy would be pretty good. But it would be an essentially useless model. Precision and recall are better metrics but are often in conflict with each other: when the precision rises, the recall tends to fall.
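The rare disease example can be checked with a few lines of Python. This is a minimal sketch with made-up numbers (1000 people, 10 of whom are sick); the function names are just for illustration.

```python
def confusion_counts(y_true, y_pred):
    """Count true/false positives and negatives for binary labels (1 = has the disease)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    return tp, fp, fn, tn


def accuracy(y_true, y_pred):
    tp, fp, fn, tn = confusion_counts(y_true, y_pred)
    return (tp + tn) / len(y_true)


def precision(y_true, y_pred):
    tp, fp, _, _ = confusion_counts(y_true, y_pred)
    # By convention, return 0.0 when the model made no positive predictions.
    return tp / (tp + fp) if (tp + fp) else 0.0


def recall(y_true, y_pred):
    tp, _, fn, _ = confusion_counts(y_true, y_pred)
    # By convention, return 0.0 when there are no actual positives.
    return tp / (tp + fn) if (tp + fn) else 0.0


# Made-up data: 1000 people, 10 of whom have the disease.
y_true = [1] * 10 + [0] * 990
always_negative = [0] * 1000  # a "model" that always guesses negative

print(accuracy(y_true, always_negative))  # 0.99
print(recall(y_true, always_negative))    # 0.0
```

The model is right 99% of the time and still finds none of the sick people, which is why recall (and precision) say more than accuracy on data this lopsided.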

Nov 14 weekly

Monday, Nov 9th

Today I practiced driving on the simulator.

Wednesday, Nov 11th

Today I split my time between doing my machine learning course and practicing driving. For most of the class I was working on the machine learning stuff. Now I am using a new driving simulator that will better represent how I will actually be driving.

Friday, Nov 13th

I spent most of my time today practicing on the driving simulator. Since we are using this new program, a lot of the time was spent working out technical issues with the simulator.

Saturday, Nov 14th

Weekly summary

This week I spent most of my time on the driving simulator. Though I did some machine learning during class, the amount of time that I spent on it was not enough. Going forward I will probably start devoting more time to my machine learning project.

Nov 7 weekly

This week I spent most of my time at ISR on the driving simulator. I think this experience will be very useful when I get my license in January. In my free time I have also been working on the machine learning crash course. Going forward I would like to strike a balance between spending time on the driving simulator and spending time working on my machine learning project.

Nov 5 daily

Now that the driving simulator is set up and installed on the new computer, I practiced driving. I am slowly learning how and when to shift gears.

Nov 3 daily

Today I helped Cade set up the driving simulation. I thought it would be a really cool idea to learn to drive manual on a simulator where I can make many mistakes without severe repercussions.